What Are We Looking At When We Look at AI Art?

Written by Zelalem Gizachew

In 2023, I was scrolling through Instagram and I stopped on a post by Girma Berta. The saturated figures, the color blocks, the long Addis shadows. But this post was something else. The images looked like Lalibela had been folded into a dream. Stone churches melting into portraiture. Painterly, impossible, and somehow completely his. I remember thinking he had pushed his craft to a level I could not have imagined. I started typing a comment underneath the post. Something like, you've raised the bar again.

Then I saw the hashtag. #midjourney.

I stopped typing. I went to Google. An hour later I was inside Midjourney for the first time.

The first prompt I used came from a note I had written to myself months earlier. It was a line about solitude. If you have plans to do something, don't

look for someone to tag along. The possibility that someone could disrupt your plans is high and you will be disappointed. Portraiture. That was the whole prompt. A thought pulled from my notes app with the word portraiture added at the end.

What came back was a painted face. A bearded man looking down. Thick teal coat, yellow scrubbed into the background, the brushwork visible and imperfect, the kind of oil portrait that would sit comfortably in a gallery. It had not existed before I typed that sentence. It existed now.

Midjourney, 2023. First generation.

The feeling wasn't confusion. It was release.

Not because something entirely new had arrived, but because something that had always been there, quietly, had become visible. The mechanics of how images come into being. The structure behind what we call creativity.

I have been using AI almost every day since that afternoon. The question everyone asks is is this real? It gets asked in every conversation, often as a challenge. Fair enough. But it was not the one I was sitting with.

The question I kept coming back to was simpler and stranger. How is this possible at all? How does a machine, working on a laptop in my kitchen, produce a face that looks like it was painted by a person with oil, a brush, and fifty years of practice? What exactly is it doing?

When I took part in curating Tales and Dreams, my intention was not to settle the first question. That argument is already saturated and often shallow. I wanted the exhibition to do something quieter. I wanted to make a space where people could sit with the second one.

This is not about a new tool. This is about a new way of seeing something that was always happening.

After my first Midjourney session, I spent weeks trying to understand how any of this could work. I had training in computer science, which helped at a basic level, but this was a craft I had always thought of as mystical. Oil paint on canvas. The hand. The years of looking. How was a computational process producing anything that looked like that?

That is how I found Margaret Boden.

I kept coming back to her because she takes something we treat as mysterious and breaks it down into something you can actually look at. She argues that creativity is structured in ways we can describe. This was the first moment in my reading where art started feeling less magical and more precise. Not a loss of wonder. The wonder moved. Instead of being impressed by the mystery, I was impressed by the structure underneath it.

Reading Boden opened a larger road for me into computational philosophy. People like Joscha Bach think about perception, cognition, even the self as computational processes. I am still learning in that space and do not pretend to have settled views. But it made intuitive sense to me, coming from a computer science background, that much of what we do as humans can be broken down and modeled. Whether that modeling will ever reach what a human body can do with paint and time is a different question. Nobody knows yet. The technology is still moving.

Since my first Midjourney session, I have given trainings on AI to architects, to advertisers, to teachers at my kids' school, to friends at the House of Culture. I have gone on TV to talk about what it could do. I have not been neutral about any of it. I was, and still am, defiantly curious.

Boden identifies three forms of creativity. In practice they bleed into each other, but it helps to look at them separately.

The first is combinational creativity.

This is the most familiar. It is what happens when we take existing things and bring them together in new ways. Mulatu Astatke folding the Ethiopian pentatonic scale into jazz harmonic structure is combinational. Rophnan layering bati scale over EDM is combinational. Elias Sime arranging discarded electronic components into tapestries that reference traditional weaving is combinational. Aida Muluneh building photographs that combine body paint, Ndebele-influenced color blocking, and Ethiopian symbolism into a single composed frame is combinational. Even cultural identity itself is combinational, built through layers of influence, memory, and exposure.

AI systems do this constantly. They recombine styles, forms, and references at a scale no single person can.

The second is exploratory creativity.

This is when the creator works inside a defined space and pushes against its edges. A photographer returning to the same subject year after year, finding new compositions within it. A musician staying inside one scale but finding new expressions through it. Girma Berta has done this for years, refining a visual language of silhouetted figures against saturated colorblocks across series after series. The space is his. The variations inside it are where the work happens.

This is where AI moves most precisely. A model learns a space of possibilities, say portraits or landscapes, and then moves around inside it, generating variations that feel new but stay consistent with the structure underneath.

The third is transformational creativity.

This is the rarest form. It does not just explore or combine. It changes the rules themselves. The shift from figurative painting to abstraction. The invention of perspective in the Renaissance. The emergence of an entirely new visual language. In Ethiopian art, Gebre Kristos Desta broke from the centuries-old iconographic tradition and brought abstract modernism into the Ethiopian canon in the 1960s. Skunder Boghossian fused surrealism with Ethiopian spiritual and mythological imagery in a way that opened a new pictorial grammar for artists who came after him. Both stepped outside the system they inherited and made the next generation's work possible.

Both humans and machines struggle here. It requires stepping outside the system, not just working harder inside it.

When we look at AI-generated images today, we are mostly seeing the first two at work. Not imagination in the romantic sense, but combination and exploration, executed with a precision and speed a human body cannot match.

Let's look at how the process actually works. Because once you see it, something shifts.

These systems are trained on vast numbers of images, not to understand them the way we do, but to learn patterns. How shapes relate. How forms tend to appear together. When a model generates an image, it is not expressing an idea. It is navigating a landscape of possibilities it has learned.

At a technical level, many of these systems begin with noise. Literally randomness. Imagine a canvas filled with static, like an untuned television. The model has already been trained beforehand by taking real images and gradually corrupting them with noise, step by step, until the original image disappears. Through this, it learns at each stage of corruption what the underlying structure likely was.

Generation is the reverse of that process.

Starting from pure noise, the model predicts, at each step, what part of that noise does not belong. It subtracts small amounts of randomness and replaces them with structure, guided by probabilities it learned during training. If you prompt it with "a woman in white surrounded by doves," that text is converted into numerical signals that steer the process, nudging the image toward certain shapes, textures, and arrangements.

This happens iteratively. Vague forms first, then rough composition, then finer details, until the image stabilizes into something recognizable.

The thing that caught me when I first understood this was how local the process is. At no point does the model see the full image the way we do. It is constantly making small predictions, pixel by pixel, region by region, about what is statistically consistent with both the noise it started from and the prompt it was given. The whole image emerges from thousands of these small bets.

In simple terms: training teaches the model how images break apart. Generation uses that knowledge to reconstruct how images come together.

What looks like creation is, technically, controlled reconstruction.

Not all prompts are concrete.

If you write "a woman surrounded by doves," the model has clear anchors. Objects, forms, compositions it has seen many times. It can map words to visual patterns with relative precision.

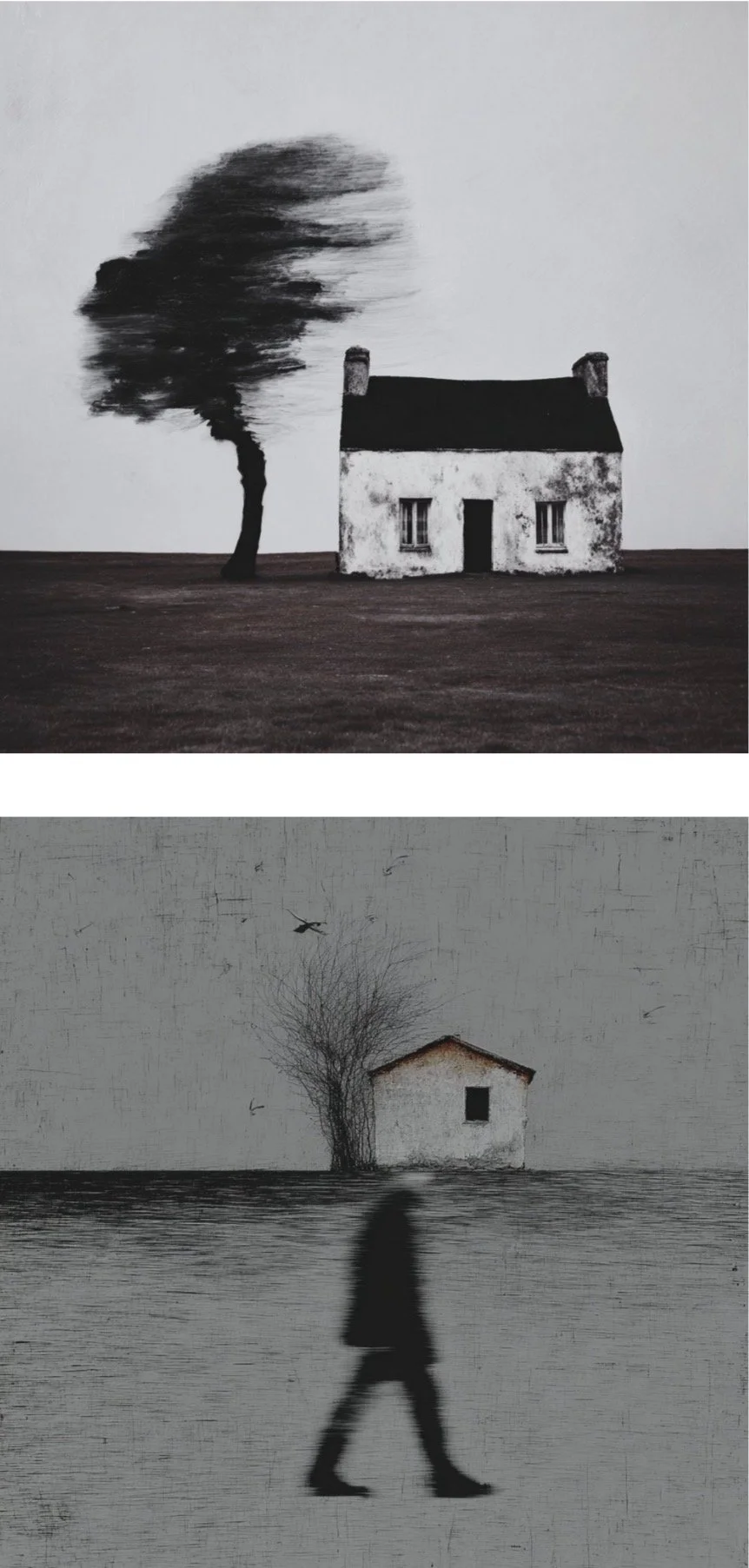

But what about a prompt like "The loss of home and the illusion of change of times"? A sentence I am making up now, the kind of abstract line that has no direct visual equivalent. I have typed prompts like this myself, many times, just to see what would come back. The images that return are rarely what you expected, and yet they rarely feel wrong.

So what is the model actually doing when there is no picture to aim at? It breaks the sentence into fragments it can work with, not by

understanding meaning, but by mapping associations learned from images and text. Home might pull toward interiors, houses, warmth, familiarity. Loss might correlate with emptiness, distance, absence, decay. Illusion might lean toward distortion, blur, surreal compositions. Change of times might connect to aging, contrast, transition, historical textures.

None of this is meaning in the human sense. It is closer to different associations pulling the image in different directions.

The model forms a kind of weighted field where some elements pull toward structure and others toward atmosphere. The prompt becomes a tension between these forces rather than a single clear instruction. When I run the same abstract prompt multiple times, I get very different images back. Each one is a different resolution of the same tension.

Three generations from the same prompt: "The loss of home and the illusion of change of times."

Such images feel symbolic. Not because the machine understands the idea, but because it assembles visual proxies for its components, layered together from patterns it has seen before.

What feels like emotion or depth is not coming from intention. It is emerging from the density of these overlapping associations.

And yet when we look at it, we are almost compelled to feel like something intentional is there. We read into it. We connect it to memory. We assign meaning.

The machine constructs the surface. We construct the significance.

This is where it gets harder for me.

Once you can describe the process this clearly, something else starts to surface. Not as an objection, but as a question I have not fully answered.

A painting is not only an image. It is also a record of the time it took to become one. The hours. The hand that grew tired. The brushstrokes that could not be undone. The places where the paint got too thick, the proportion that drifted slightly, the line that trembled. These are not failures of the work. Often they are the work. Traces of a body moving through time, leaving evidence of its passage.

A painting is not only a composition on a surface. It is also a record of cost. And that cost, once seen, becomes part of what the painting is.

AI images do not carry this kind of cost in the same way. A prompt of a few words can produce, in seconds, something that resembles weeks of labor. The image and the making of the image have been separated in a way they never were before. Even photography preserved the moment of capture as a real event in the world. Someone had to be somewhere, at some time, with a body. My own analog practice still holds me to that. I load the film. I wait for the light. I carry the cost of the mistakes. Film gets scratched. Light leaks where I did not expect it. Some of the images I like best are the ones where the imperfection is what makes them specific to the roll, the camera, the afternoon. The image exists because I was in the room with it. AI generation loosens even that thread.

This does not make the resulting images empty. But it raises a question the frame of "combination and exploration" cannot quite hold.

What part of what we value in art is the image itself, and what part is the evidence of what it cost to make?

My first Midjourney image, from 2023, had thickness. The paint looked like paint. The proportions drifted. The face carried something uneven, whichwas part of why I believed it. It looked like someone had spent time on it, even though no one had.

Midjourney, 2026. Another recent generation from the same prompt.

Three years later, in 2026, I used the same prompt again in a current version of Midjourney, with a personal style I had developed over hundreds of generations. The new image came back cleaner. More resolved. A modernist portrait, geometric, almost architectural.

Midjourney, 2026. Same prompt, latest version.

Both carry traces of what reads like labor. But they are different kinds of traces. The 2023 image wears its imperfection on the surface. The 2026 images are more stylized and more certain of themselves. The visible uncertainty is gone. Something about the older image still feels closer to a human hand, even though none of them came from one.

Here is what I have been sitting with. At the moment, image generation models produce outputs that sometimes carry this trace. Music generation is different. If you have listened to Suno or Udio or any of the current music models, you have probably noticed how flat they sound. The songs are technically clean. They are also averaged. They gravitate toward the statistical middle of a genre and sit there. Nothing is wrong with any note, but nothing is only itself either. Music models are where image models were two or three years ago.

I do not know why the gap exists. Maybe music is harder because it unfolds in time, and time is part of where labor lives. Maybe it is because music training data is smaller, or cleaner, or more constrained. Maybe image models caught up faster because we are more forgiving of visual oddness than of musical oddness.

But if image generation has moved from clearly averaged to sometimes carrying traces of specificity in three years, what happens in the next three? What happens when music models close the same gap? And what does it mean that the "mark of labor" seems to be something a model can learn to produce, not some essential property of human making?

I do not have an answer. Someone who thinks more carefully about labor than I do will have to work that out. But it has stopped me from claiming that AI and human processes are pointed in opposite directions. Today they often are. Tomorrow, I am not sure.

What do we lose when models average? And what is the meaning of a mark that no one else would have made, if a machine can also learn to make it?

Standing in this exhibition, you are not just looking at images. You are looking at a system that returns something to you. Part of what it returns is a picture. Part of it is the outline of what the picture cannot carry.

And that outline lands differently in a place like ours.

In Addis, identity is never singular. It is always layered, often negotiated, and sometimes imposed. Languages pulled from different regions. Aesthetics shaped by faith and migration and trade. Histories that do not agree with each other and still have to share a room.

If all of that were reduced into patterns, into a space of possibilities, what would emerge? What combinations would repeat? What gets erased, flattened, or dulled when difference is turned into pattern? What refuses to be captured at all?

These are not questions the machine can answer. But they are questions I have to carry as someone who helped put this exhibition together. When we show AI-generated work in a gallery, when we place it inside the same category we use for paintings and sculptures and photographs, we are doing something to the category itself. Not necessarily something bad. But something. The boundaries of what we call art quietly shift to include what this new kind of system can produce. And over time, there is a risk that what the system can produce becomes what we expect art to look like. An exhibition like this one participates in that shift whether it intends to or not.

I do not know how to resolve that. I am not sure it needs to be resolved. But it seems worth naming inside the room where it is happening.

So rather than asking whether this is art, I find it more useful to sit with the questions the work keeps opening.

What is the meaning of labor, of imperfection, of the

mark that no one else would have made?

What gets left outside a category when we widen it?

And if we agree that creativity can be partially

broken down and modeled, what, exactly, are we

still holding onto as ours?

Tales and DreamsApril 25 – May 17 · 10am to 7pm dailyArtawi Gallery · KKARE Homes, 2nd Floor, Bole